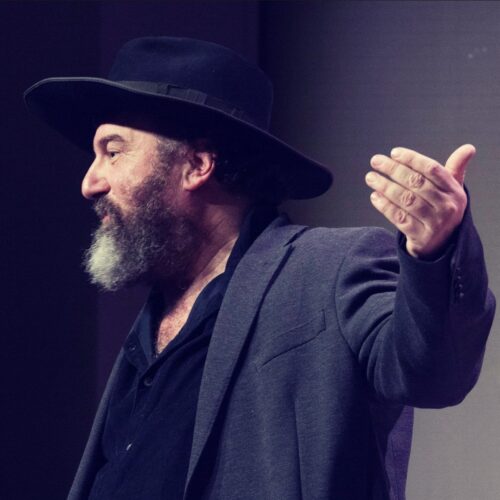

George Dvorsky on Transhumanism and the Singularity

Socrates / Podcasts

Posted on: August 26, 2010 / Last Modified: December 21, 2021

In this edition of Singularity Podcast, I had the pleasure of speaking with prominent Canadian transhumanist and animal rights advocate George Dvorsky. George is both a passionate and a fascinating interlocutor, and even though I spent over an hour and 15 minutes interviewing him, I feel I could easily have spent twice as long and still remained highly interested in what he had to say. (So do not be surprised if I invite him back for another podcast.)
Just one of the thoughts that I will personally take away from my conversation with Dvorsky:

Mass extinction is the simplest explanation for why we are seeing an uncolonized galaxy.

George Dvorsky’s Short Bio: Canadian futurist, ethicist, and animal rights advocate George Dvorsky has written and spoken extensively about the impacts of cutting-edge science and technology, particularly as they pertain to the improvement of human performance and experience. He currently serves on the Board of Directors of the Institute for Ethics and Emerging Technologies and writes a popular blog called Sentient Developments.