The Why Is a Discipline: Why a Good Why Is Also Not Enough

A good why can still produce bad outcomes. Here is the case a reader made me wrestle with.

***

After I published The AI Paradox: Cure or Poison? last week, a reader asked me a question I have been thinking about ever since.

She did not ask whether AI could malfunction. She did not ask whether bad actors could misuse it. She asked something sharper.

Can a system produce bad outcomes systematically, even when intent is good, and nothing is broken?

The answer is yes. And it is the most dangerous category of bad outcome, because nobody is at fault and nothing is broken.

Most people reach for social media as an example. It is the wrong one. The dopamine loop was designed in, not drifted into. I made that case in detail in my 2018 critique of Facebook and will not relitigate it here. Let me give you two cleaner cases.

Case One: The Hiring Algorithm

In 2014, Amazon set out to build a hiring tool that would screen resumes without the unconscious bias of human recruiters. The why was actively progressive: remove bias from a process that had been stained by it for a century.

The system worked exactly as designed.

It taught itself to systematically downgrade resumes containing the word “women’s,” as in “captain of the women’s chess club.” It penalized graduates of two all-women’s colleges. Why? Because it was trained on ten years of Amazon’s own historical hiring data, which was overwhelmingly male. The system found the pattern “successful candidate looks like a man” and surfaced men.

The why was good. The engineers were not sexists. The system was not broken. The training data was the problem.
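
To make the mechanism concrete, here is a toy sketch of how a scorer with no notion of gender can still learn to penalize a word. Everything in it is an assumption for illustration: the six-row history is invented, and the names `token_hire_rates` and `score` are hypothetical. It is not a reconstruction of Amazon's system, only the same failure in miniature.

```python
from collections import Counter

# Invented, deliberately skewed history: (resume tokens, was the person hired?).
# The skew stands in for a decade of mostly male hires.
history = [
    ({"python", "chess"}, True),
    ({"python", "rowing"}, True),
    ({"java", "chess"}, True),
    ({"java", "rowing"}, False),
    ({"python", "women's", "chess"}, False),
    ({"java", "women's", "rowing"}, False),
]

def token_hire_rates(records):
    """Fraction of past resumes containing each token that led to a hire."""
    seen, hired = Counter(), Counter()
    for tokens, was_hired in records:
        for token in tokens:
            seen[token] += 1
            if was_hired:
                hired[token] += 1
    return {token: hired[token] / seen[token] for token in seen}

def score(tokens, rates, default=0.5):
    """Score a resume as the average historical hire rate of its tokens."""
    values = [rates.get(token, default) for token in tokens]
    return sum(values) / len(values)

rates = token_hire_rates(history)
base = score({"python", "chess"}, rates)
with_token = score({"python", "chess", "women's"}, rates)

print(f"without the token: {base:.2f}")
print(f"with the token:    {with_token:.2f}")
```

Nothing in the code mentions gender, and nothing in it is broken. The second resume scores lower only because the history it learned from never rewarded that word.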

Case Two: The Recommendation Engine

YouTube’s recommendation algorithm was built to do one simple thing: keep showing users videos they want to watch.

The system worked exactly as designed.

In 2018, sociologist Zeynep Tufekci described the pattern in a New York Times piece that has aged uncomfortably well. Videos about vegetarianism led to videos about veganism. Videos about jogging led to videos about ultramarathons. Mainstream political content led to the strident, then the more strident, then conspiracy and radicalization pipelines at the bottom of the funnel. Tufekci wrote that YouTube “may be one of the most powerful radicalizing instruments of the 21st century.”

YouTube did not set out to radicalize anyone. The engineers optimized for watch time because they took it to be a proxy for the value delivered to the user. It was not. Watch time turned out to be a proxy for psychological capture. The system optimized faithfully. Millions ended up somewhere they had not intended to go, and many never came back.

(More recent research suggests the rabbit-hole effect is more nuanced than the original framing implied, with much of the extremist exposure driven by user choice rather than the algorithm alone. Fair enough. The structural argument still holds: a system optimizing for watch time will surface whatever holds attention, and what holds attention is often not what serves the viewer.)
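
The gap between the proxy and the goal fits in a few lines. This is a minimal sketch under loud assumptions: the catalog is invented, `viewer_benefit` is a hypothetical number that no real system can observe directly, and `recommend` is an illustrative name, not anything from YouTube's code.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Video:
    title: str
    watch_minutes: float   # the proxy: what the system can measure
    viewer_benefit: float  # the goal: hypothetical, invisible to the system

# Invented catalog for illustration only.
catalog = [
    Video("intro to jogging", 6.0, 0.8),
    Video("marathon training plan", 9.0, 0.7),
    Video("outrage compilation", 25.0, -0.5),
]

def recommend(videos, key):
    """Return the single video that maximizes the given objective."""
    return max(videos, key=key)

by_proxy = recommend(catalog, key=lambda v: v.watch_minutes)
by_goal = recommend(catalog, key=lambda v: v.viewer_benefit)

print("optimizing watch time picks:    ", by_proxy.title)
print("optimizing viewer benefit picks:", by_goal.title)
```

The same `recommend` function runs both times. Only the objective differs, and the system can only ever be handed the objective it is able to measure.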

Goodhart’s Law

In 1975, the British economist Charles Goodhart formulated the principle that explains both cases. It is usually quoted in this later paraphrase:

When a measure becomes a target, it ceases to be a good measure.

This is the central failure mode of any optimizing system, human or machine. Pick a number to chase, and the system games the number rather than serving the goal the number was supposed to represent. The dashboard reads green while the goal quietly disappears.

AI labs in 2026 are running the same play at planetary scale. They optimize for benchmarks. Benchmarks proxy for capability. Capability proxies for usefulness. Usefulness proxies for human flourishing. Each link adds drift. By the end of the chain, the system is optimizing for something nobody at the lab actually believes in, while every metric reads green.
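
A back-of-the-envelope sketch shows how quickly drift compounds across the chain of proxies listed above. It rests on a strong simplifying assumption: each link is a noisy linear relationship, and each layer depends only on the one before it, in which case the end-to-end correlation is the product of the link correlations. The 0.8 figures are invented, not measurements of any lab or benchmark, and `end_to_end_correlation` is a hypothetical helper.

```python
from math import prod

def end_to_end_correlation(link_correlations):
    """Correlation between the first and last layer of a proxy chain.

    Assumes a linear, jointly Gaussian chain in which each layer depends
    only on the previous one, so the link correlations multiply.
    """
    return prod(link_correlations)

# Invented numbers: each proxy tracks the next layer quite well on its own.
links = {
    "benchmark -> capability": 0.8,
    "capability -> usefulness": 0.8,
    "usefulness -> flourishing": 0.8,
}

overall = end_to_end_correlation(links.values())
print(f"benchmark -> flourishing: {overall:.3f}")
```

Under these assumptions, three links that each look respectable leave the benchmark only loosely tied to the thing it was meant to serve, while every individual dashboard still reads green.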

The Refinement

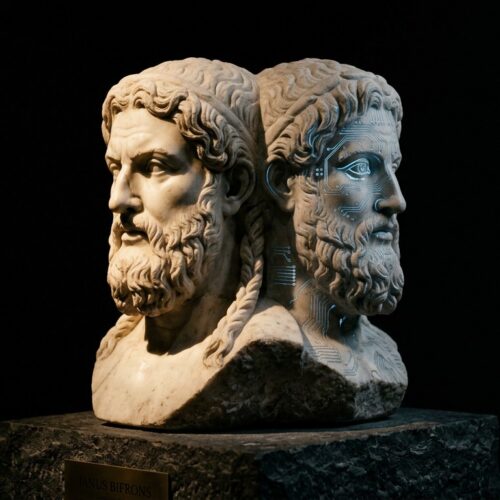

In The AI Paradox, I argued that AI without a good why is a cancer, not a cure. That is true. It is also incomplete.

For years, I have argued that technology is not enough. That the how has to serve a why. What this reader’s question forced me to see is that a good why, declared once and never revisited, is also not enough.

A good why is necessary. It is not sufficient.

A good why declared at the founding meeting and never revisited is not a good why. It is a dogma, a dead relic. In the meantime, the system optimizes toward whatever it can measure, and what it can measure is never quite the same as what you meant.

The why has to be examined, defended, and revisited. Continuously.

That is the work. That is why the actual why is a discipline. Not a checkbox.

It is a practice you commit to for as long as the system is running.

Which means, in the case of AI, for as long as we are.

***

This post is a follow-up to The AI Paradox: Cure or Poison? My thanks to the reader whose question forced this refinement.