Human Rights for Artificial Intelligence: What is the Threshold for Granting (Human) Rights?

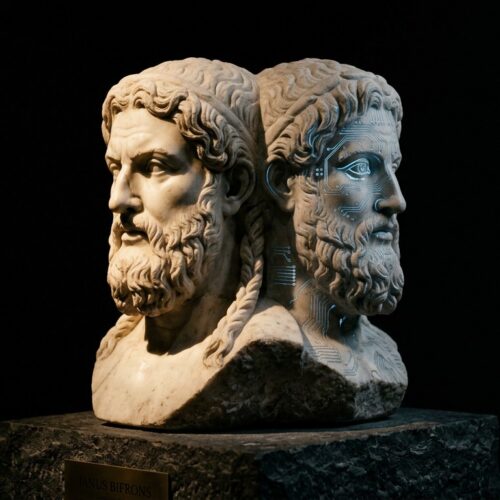

It is the year 2045. Strong artificial intelligence (AI) is integrated into our society. Humanoid robots with non-biological brain circuitries walk among people in every nation. These robots look like us, speak like us, and act like us. Should they have the same human rights as we do?

The function and reason of human rights are similar to the function and cause of evolution. Human rights help develop and maintain functional, self-improving societies. Evolution perpetuates the continual development of functional, reproducible organisms. Just as humans have evolved, and will continue to evolve, human rights will continue to evolve as well. Assuming strong AI will eventually develop strong sentience and emotion, the AI experience of sentience and emotion will likely be significantly different from the human experience.

But is there a definable limit to the human experience? What makes a human “human”? Do humans share a set of traits which distinguish them from other animals?

Consider the following so-called “human traits” and their exceptions:

Emotional pleasure / pain – People with a dissociative disorder experience a disruption or loss of awareness, identity, memory, and / or perception. This can result in the inability to experience emotions.

Physical pleasure / pain – People with sensory system damage may have partial or full paralysis. Loss of bodily control can be accompanied by inability to feel physical pleasure, pain, and other tactile sensations.

Reason – People with specific types of brain damage or profound intellectual disability may lack basic reasoning skills.

Kindness – Those with specific types of psychosis may be unable to feel empathy, and in turn, are unable to feel and show kindness.

Will to live – Many suicidal individuals lack the will to live. Some people suffering from severe depression and other serious mental disorders also lack this will.

So what is the human threshold for granting human rights? Consider the following candidates:

A person with a few non-organic machine body parts.

A human brain integrated into a non-organic machine body.

A person with non-biological circuitry integrated into an organic human brain.

A person with more non-biological computer circuitry mass than organic human brain mass.

The full pattern of human thought processes programmed into a non-biological computer.

A replication of human thought processes in an inorganic matrix.

Which of these should be granted full “human rights”? Should any of these candidates be granted human rights while conscious and cognitive non-human animals (cats, dogs, horses, cows, chimpanzees, et cetera) are not? When do consciousness and cognition manifest within a brain, or within a computer?

If consciousness and, in turn, cognition are irreducible properties, these properties must have thresholds, before which the brain or computer is void of these properties. For example, imagine the brain of a developing human fetus is non-conscious one day, then the next day has at least some level of rudimentary consciousness. This rudimentary consciousness, however, could not manifest without specific structures and systems already present within the brain. These specific structures and systems are precursors to further developed structures and systems, which would be capable of possessing consciousness. Therefore, the precursive structures which will possess full consciousness – and the precursors to consciousness itself – must not be irreducible. A system may be more than the sum of its parts, but it is not less than the sum of its parts. If consciousness and cognition are not irreducible properties, then all matter must be panprotoexperientialistic at the least. Reducible qualities are preserved and enhanced through evolution. So working backward through evolution from humans to fish to microbes, organic compounds, and elements, all matter, at minimum, exists in a panprotoexperientialistic state.

Complex animals such as humans possess sentience and emotion through the evolution of reactions to internal stimuli. Sentience and emotion – like consciousness – are reproduction-enhancing tools which have increased in complexity over evolutionary time. An external stimulus will trigger an internal stimulus (emotional pleasure and pain). This internal stimulus, coupled with survival-enhancing reactions to it, will generally increase the likelihood of reproduction. Just as survival-appropriate reactions to physical pleasure and pain increase our likelihood of reproduction, so do survival-appropriate reactions to emotional pleasure and pain.

Obviously, emotions may be unnecessary to continue reproduction in a post-strong-AI world. But they will still likely be useful in preserving human rights. We don’t yet have the technology to prove whether a strong AI experiences sentience. Indeed, we don’t yet have strong AI. So how will we humans know whether a computer is strongly intelligent? We could ask it. But first we have to define our terms, and therein lies the dilemma. Paradoxically, strong AI may be best at defining these terms.

Definitions as applicable to this article:*

Human Intelligence – Understanding and use of communication, reason, abstract thought, recursive learning, planning, and problem-solving; and the functional combination of discriminatory, rational, and goal-specific information-gathering and problem-solving within a Homo sapiens template.

Artificial Intelligence (AI) – Understanding and use of communication, reason, abstract thought, recursive learning, planning, and problem-solving; and the functional combination of discriminatory, rational, and goal-specific information-gathering and problem-solving within a non-biological template.

Emotion – Psychophysiological interaction between internal and external influences, resulting in a mind-state of positivity or negativity.

Sentience – Internal recognition of internal direct response to an external stimulus.

Human Rights – Legal liberties and considerations automatically granted to functional, law-abiding humans in peacetime cultures: life, liberty, the pursuit of happiness.

Strong AI – Understanding and use of communication, reason, abstract thought, recursive learning, planning, and problem-solving; and the functional combination of discriminatory, rational, and goal-specific information-gathering and problem-solving above the general human level, within a non-biological template.

Panprotoexperientialism – Belief that all entities, inanimate as well as animate, possess precursors to consciousness.

* Definitions provided are not necessarily standard to intelligence- and technology-related fields.

About the Author:

CMStewart is a psychological horror novelist, a Singularity enthusiast, and a blogger. You can follow her on Twitter @CMStewartWrite or go check out her blog CMStewartWrite.
