Robotic Warriors or Warriors Who are Robots?

Michelle A. Janeson / Op Ed

Posted on: March 2, 2012 / Last Modified: March 2, 2012

Is there a difference between the two, and where does human moral responsibility lie with each?

“Having reached the station in a driverless taxi –

A robot will seize us and our luggage –

And deposit us in a train –

With a driverless engine.

We shall get a mechanically supplied meal in the restaurant car –

And be automatically evicted at our destination”

W.K. Haselden, 1928

Automation and artificial intelligence have been a concern since the early part of the last century. However, anxieties about the ability of machines to ‘take over’ and blur the margins between human and machine have never been stronger than they are now, with some of Hollywood’s fictional visions coming ever closer to being realized. Films like I, Robot (2004) and A.I. (2001) explore our darkest fears about artificial intelligence and robotics. Now that these technologies are moving from fantasy into fact, responsible researchers have begun asking for guidelines to govern the future of AI and robotics.

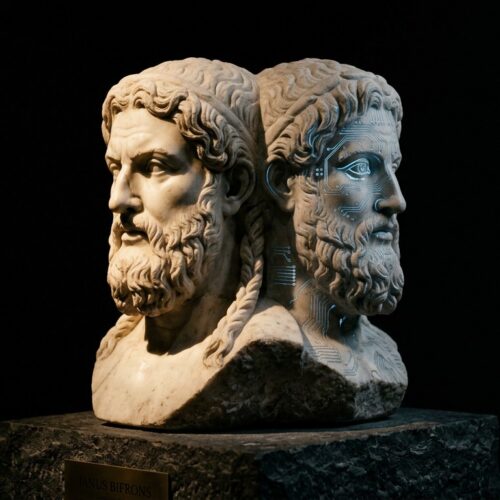

This week a new consultation was announced in the UK by the Nuffield Council on Bioethics, tasked with examining the moral and ethical questions raised by advancing technology. It is clear that boundaries are becoming blurred when experiments involving the implantation of microchips into human brains, which have the ability to affect behavior remotely, are being successfully performed. And they are. The chairman of the Working Party examining these issues is Professor Thomas Baldwin, who is in no doubt that they need to be examined. He notes that these advances “…challenge us to think carefully about fundamental questions to do with the brain: what makes us human, what makes us an individual and how and why we think and behave in the way we do.”

Robot Warriors

The military uses of advanced AI and robotic technology seem to have been widely accepted, without much soul searching. US drones, or Unmanned Aerial Systems, have been operating in Iraq and Afghanistan for some years and, despite unease, have been remarkably successful. The avoidance of putting boots on the ground is more than a monetary concern here. The US military spent $5 billion a year on drones, fleets of which have increased hugely in the last decade. Drones are effective at remaining undetected and can wait until the optimum moment to strike. The counter-argument to those who question the morality of remote killing is that drones are in fact highly accurate, largely hitting only military targets, and cause far less disruption to a population than an invading force with its inevitable civilian casualties.

Is it Moral?

The consensus so far seems to be that the use of robotic or remote instruments of war does not contravene the Laws of Armed Conflict (LAC) based on the Geneva Convention. The LAC state that if an attack is launched, the weapons system – be it man or machine – must be able to verify that the target is a legitimate one, that is, military and not civilian. Drones can do this admirably well, since they have the ability to observe a suspected target for up to 18 hours at a time, gathering data to ensure the accuracy of the intelligence received. Furthermore, the Convention goes on to state that the military must minimize civilian harm and avoid disproportionate collateral damage. Drones score high here again.

Clinical Killing

Or do they? The fact that these actions can be performed with such clinical accuracy is the very reason that some ethicists and civil rights advocates are so uneasy. They argue that it is precisely the boots-on-the-ground element of war which limits it, and creates incentives to withdraw or desist from further aggression. If death can be delivered by a pilot at a computer terminal, who can kill and then walk away to have a coffee break just a few minutes later, then there is a fundamental disconnect between man and his actions, which puts the ethics of war on an entirely different level. If nations can deliver bombs with no risk to the lives of their own citizens, then what reason is there ever to stop killing?

Without body bags coming home, and the tears of grieving widows and mothers, where is the political pressure to seek peace? These factors act as fundamental brakes on warfare, and with the rise of robotics and robotic warriors there seems to be an entirely different set of ethical arguments to be made – above and beyond those of the Geneva Convention. Times have changed and morality needs to change with them. But with the war against terrorism waged from the air above the mountains of Pakistan, where is the incentive to take a closer look at these issues going to come from?

Perhaps it is time we all explored the philosophy and ethics of our increasingly smart and autonomous military machines: Do we want robotic warriors or warriors who are robots?

About the Author:

Michelle A. Janeson is a freelance writer and terrified of the future. She works within the system, not in order to destroy it from the inside but for cowardly safety, and works for a balance transfer offers site in the UK.