Artificial, Intelligent, and Completely Uninterested in You

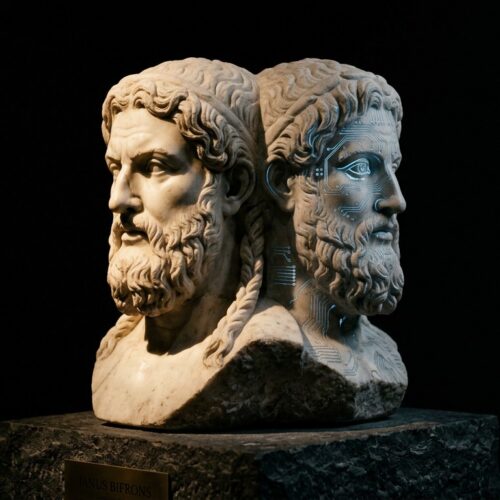

Artificial intelligence is obviously at the forefront of the singularitarian conversation. The bulk of the philosophical discussion revolves around a hypothetical artificial general intelligence’s presumed emotional state, motivation, attitude, morality, and intention. A lot of time is spent theorizing the possible personality traits of a “friendly” strong AI or its ominous counterpart, the “unfriendly” AI.

Building a nice and cordial strong artificial intelligence is a top industry goal, while preventing an evil AI from terrorizing the world gets a fair share of attention as well. However, there has been little public and non-academic discussion around the creation of “uninterested” AI. Essentially, this third state of theoretical demeanor or emotional moral disposition for artificial intelligence doesn’t concern itself with humanity at all.

Dreams and hope for friendly or benevolent AI abound. The presumed limitless creativity and invention of these hyper-intelligent machines come with the hope that they will enlighten and uplift humanity, saving us from ourselves during technological singularity. These “helpful” AI discussions are making strides in the public community, no doubt stemming from positive enthusiasm for the subject.

Grim tales and horror stories of malevolent AIs are even more common, pervading our popular culture. Hollywood’s fictional accounts of AIs building robots that will hunt us like vermin are all the rage. Although it is questionable that a sufficiently advanced AI would utilize such inefficient means to dispose of us, it still exposes human egotistical fear in the face of superiority.

Both of these human-centric views of AI, as our creation, are in many ways conceited. Because of this, we attribute to these AIs either an existential threat or a desire to exalt us, based upon our self-gratifying perception of our importance to the artificial intelligence we seek to create.

Pondering the disposition toward humanity that an advanced strong AI will have is conjecture, but it is an interesting thought exercise for the public to debate nonetheless. An advanced artificial general intelligence may simply see men and women in the same light as we view a sperm and egg cell, instead of as mother or father. Perhaps an artificial hyper-intelligence will view its own Seed-AI as its sole progenitor. Maybe it will feel that it has sprung into being through natural evolutionary processes, in which humans are but a small link in the chain. Alternatively, it may look upon humanity in the same light as we view Australopithecus africanus, a distant predecessor or ancestor, far too primitive to be on the same cognitive level.

It is assumed that as artificial intelligence increases its capacity far beyond ours, the gulf in recognized dissimilarity between it and us will grow. Many speculate that this is a factor that will cause an advanced AI to become callous or hostile toward humanity. However, this gap in similarity may mean that there will be an overall non-interest in humanity for a theoretical AI. Perhaps non-interest in humanity or human affairs will scale with the difference, widening as the intelligence gap increases. As the AI increases its capabilities into the hyper-intelligence phase of its existence, which may happen rapidly, behavioral motivations could shift as well. Perhaps a friendly or unfriendly AI in its early stages will “grow out of it,” so to speak, or will simply grow apart from us.

It is perhaps narcissistic to believe that our AI creations will have anything more than a passing interest in interacting with the human sphere. We humans have a self-centered stake in creating AI. We see the many advantages to developing friendly AI, where we can utilize its heightened intellect to bolster our own. Even with the fear of unfriendly or hostile AI, we still have optimism that highly intelligent AI creations will still hold enough interest in human affairs to be of great benefit. We are absorbed with the idea of AI and in love with the thought that it will love us in return. Nevertheless, does an intelligence that springs from our own brow really have to concern itself with its legacy?

Will AI view humanity as importantly as we view it?

The universe is inconceivably vast. With increased intelligence comes increased capability to invent and produce technology. Would a sufficiently intelligent AI even bother to stick around, or would it want to leave home, as in William Gibson’s popular and visionary novel Neuromancer?

Even a limited-intelligence being like man does not typically socialize with vastly lower life forms. When was the last time you spent a few hours lying next to an anthill in an effort to have an intellectual conversation? To address the existential risk argument of terminator-building hostile AI, when was the last time you were in a gunfight with a colony of ants? Alternatively, have you ever taken the time to help the ants build a better mound and improve their quality of life?

One could wager that if you awoke next to an anthill, you would make a hasty exit to a distant location where the ants were no longer a bother. The ants and their complex colony would be of little interest to you. Yet we do not seem to find it pretentious to think that a far superior intelligence would choose to reside next to our version of the anthill, the human-filled Earth.

The best-case scenario, of course, is that we create a benevolent and friendly AI that will be a return on our investment and benefit all of mankind with interested zeal. That is something most of us can agree is a worthy endeavor and a fantastic near-future goal. We must also publicly address the existential risk of an unfriendly AI, and mitigate the possibility of bringing about our own destruction or apocalypse. However, we must also consider the possibility that all of this research, development, and investment will be for naught. Our creation may cohabit with us while building a wall to separate itself from us in every way. Alternatively, it may simply pack up and leave at the first opportunity.

We should consider and openly discuss all of the possible psychological outcomes that can emerge from the creation of an artificial and intelligent persona, instead of narrowly focusing on only the two polar concepts of good and evil. There are myriad philosophical and behavioral theories on the topic of AI that have not even been touched upon here, going beyond the simple good-or-bad AI public discussion. It is worthwhile to consider these points and put the spotlight on the brilliant minds that have researched and written about these theories.

AI development will likely be an intertwined and important part of our future. It has been said that the future doesn’t need us. Perhaps we should further that sentiment to ask if the future will even care that we exist.

About the Author:

Tracy R. Atkins has been a career technology aficionado since he was young. At the age of eighteen, he played a critical role in an internet startup, cutting his tech-teeth during the dot-com boom. He is a passionate writer whose stories intertwine technology with exploration of the human condition. Tracy is also the self-published author of the singularity fiction novel Aeternum Ray.
