Turing à la Nikolić: From Thoughtless to Thoughtful

Charles Edward Culpepper / Op Ed

Posted on: November 7, 2015 / Last Modified: November 7, 2015

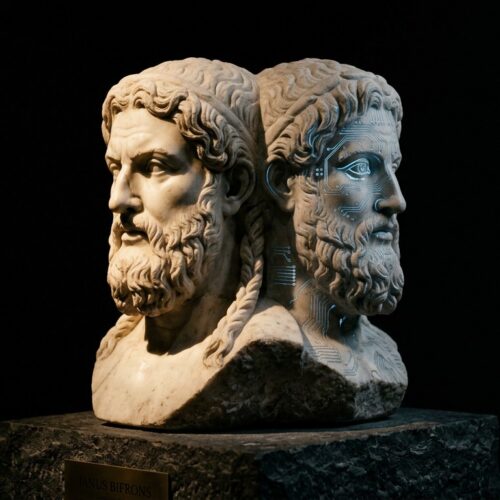

Alan Turing’s 1950 cult classic, “Computing Machinery and Intelligence” (Mind 59: 433-460), is divided into seven sections. The sixth section, entitled “Contrary Views on the Main Question” (the question being “Can machines think?”), is a whimsical list of nine reasons why people might believe that computers cannot think.

But before he conveys the objections of others, he first confesses his own viewpoint, which has since been quoted countless times, for myriad reasons. The dry bomb he dropped was:

“The original question, ‘Can machines think?’ I believe to be too meaningless to deserve discussion.”

This leaves a very bitter taste of the perfunctory in my mouth. His offhandedness in this case is intellectually contemptible. It smells like a witches’ brew of laziness, cowardice and lack of imagination, what I would call obligatory fatalism. A disgusting concession. But in due deference to his undeniably sublime genius and commensurate accomplishments, I put it down to a combination of ignorance, milieu and zeitgeist. But enough of my indulgent pontification. Let’s examine the list of people’s objections to the possibility of machine thought.

Objections:

- Spiritual objection – thinking results from an immortal soul, and machines cannot have a soul.

- Ostrich objection – the idea that machines could think is too dreadful to imagine.

- Gödelian objection – computers are discrete-state devices subject to Gödel’s incompleteness theorems.

- Dis-qualia-fied objection – thought requires conscious, subjective experience, not mere data.

- Limitations objection – thought requires higher emotions, creativity and a personal perspective.

- Originality objection – machines can only do what they’re instructed to do.

- Neuromorphist objection – the human nervous system is not a discrete-state mechanism.

- Conformist objection – if people always follow rules they would be no different than machines.

- ESP objection – people may have extrasensory perception, but no machine does or can have it.

Turing’s Response to Each Objection:

#1: “I am unable to accept any of this.”

#2: “I do not think that this argument is sufficiently substantial to require refutation.”

#3: “In short, then, there might be men cleverer than any given machine, but then again there might be other machines cleverer again, and so on.”

#4: “In short then, I think that most of those who support the argument from consciousness could be persuaded to abandon it rather than be forced into the solipsist position.”

#5: “The criticisms that we are considering here are often disguised forms of the argument from consciousness.”

#6: “The view that machines cannot give rise to surprises is due, I believe, to a fallacy to which philosophers and mathematicians are particularly subject. This is the assumption that as soon as a fact is presented to a mind all consequences of that fact spring into the mind simultaneously with it.”

#7: “It would not be possible for a digital computer to predict exactly what answers the differential analyser would give to a problem, but it would be quite capable of giving the right sort of answer.”

#8: “I would defy anyone to learn from these replies sufficient about the programme to be able to predict any replies to untried values.”

#9: “With ESP anything may happen.”

My Own Response to Each Objection:

#1: There is no such thing as a soul.

#2: Irrelevant.

#3: I do not believe a physical Turing Machine (a purely discrete-state device) can ever produce thought, although it can behave intelligently, i.e., make correct choices. Alternatively, I could say that Gödel’s Incompleteness Theorem demonstrates that no formal system (of which purely discrete-state machines are an example) can think, because thinking requires the embodiment of an included middle. That is, thinking necessarily demands that decisions not be limited to true-or-false criteria, which is just another way of stating that Turing Machines can’t think. I believe ‘thought proper’ demands a management of abduction, deduction, induction and a-logical preference. Preference can be based on emotion or something analogous to emotion, like ethological imperatives (genomic default values, e.g., the sucking reflex), which a utility function is not. Utility functions arise from probabilistic logic. Utility functions are not a source of preference; they are what preference operates and compels. Loosely: preference is the driver, logic is the engine, utility is the car and adaptation is the destination.

#4: Without a falsifiable definition of consciousness (and we don’t have one), the objection is undecidable.

#5: It is a distinction without a difference from the argument from consciousness, i.e., objection #4.

#6: I believe computers have demonstrated originality in their computational products, and I believe that originality in product is necessary for thinking, but not sufficient. Therefore, I disagree with Lady Ada Lovelace that computers are incapable of originality. However, their demonstrated originality is not sufficient to produce ‘real’ thought, although it is required.

#7: This argument is true for a purely digital device, but it is not true of all conceivable machines.

#8: This objection is effectively the statement that human thought is not reducible to “if-then” rules. This is true, but neuromorphic machines, neural networks, cortical model machines and, in fact, all machines capable of learning (modifying behavior appropriately, based on new data) are not, in any practical sense, reducible to “if-then” rules either. But from a Practopoietic point of view, we know that machines can be built to think, provided they are meta-meta-cybernetic systems designed for adaptation, i.e., they are T3 systems, which consist of three traverses.

#9: There is no such thing as Extrasensory Perception (ESP).

What I conclude from all this is that Practopoiesis is generally ignored by AI theorists, researchers and technologists, despite the fact that they have yet to produce a generally accepted definition of machine thought. They have not defined a hardware or software product describable by a small number of principles, or demonstrable by a sufficiently tested device.

You can paint a desert of intricate machinations, or a sea-surface of features that stay the same no matter how often they change, until you have a monstrous entity beyond human reach, let alone grasp, but that only implies that you do not have sufficient understanding. When complexity is too high, funding too large or fame too precious, a project becomes “too big to fail”, and ironically it is therefore likely to fail. Darwin reduced evolution to a handful of principles, and in doing so changed science generally and biology particularly.

The Wright brothers, by 1903, stripped the principles of flight down to their bare essence: wing size, shape, assortment and placement; engine power-to-weight ratio; and, most importantly, wing control via warping. In short, the Wright Flyer was a “critical ensemble” (a group of features that in aggregate produce an emergent, entirely new class of functionality). A mere 32 years after the Wright brothers’ critical ensemble, the 1935 Douglas DC-3, with five critical components ((1) variable-pitch propeller, (2) retractable landing gear, (3) lightweight molded “monocoque” body, (4) radial air-cooled engine, (5) wing flaps), was another leap in aeronautics by virtue of critical ensemble.

The DC-3 example is from Peter Senge (b. 1947), The Fifth Discipline: The Art and Practice of the Learning Organization (1990).

The Wright Flyer example is my own.

Another interesting example of the concept of critical ensemble can be found at: https://en.wikipedia.org/wiki/Critical_Art_Ensemble

Critical ensemble is a particular case of gestalt. The Merriam-Webster dictionary defines gestalt as “a structure, configuration, or pattern of physical, biological, or psychological phenomena so integrated as to constitute a functional unit with properties not derivable by summation of its parts”. The idea of a gestalt is essentially the same as the Greek concept of holism (https://en.wikipedia.org/wiki/Holism). I vehemently believe that the anti-reductionist attribute of holism is an absolutely necessary component of brain comprehension [no pun intended], because the brain is the whole body. This is not a philosophical position but a technical one: the brain cannot create a mind unless it has a body; otherwise it is nothing more than a data library.

Researchers who try to approach artificial intelligence in the same manner as physicists, through reductionism, will fail. This is only my opinion, but I find that physicists are the worst theorists in AI research. Evidently Marvin Minsky agrees: in a comparison between physics, biology and AI, he cautions, “Here’s what I think is the problem, the brain doesn’t work in a simple way.” An anti-reductionist (productionistic) methodology is the only way forward. Unfortunately, men tend toward one-track-mindedness. Maybe women would make better AI researchers: because of their reportedly larger corpus callosum, they seem to handle networks of facts much better than men do.

And so, the natural question to ponder is how, and when, the field of artificial intelligence is likely to aggregate a small number of features into a critical ensemble. And what will be the constituent mix of features that will allow a synthetic mind to take flight on the wings of a man-made brain? It may well be that the combination of Knowm processors, Numenta Hierarchical Temporal Memory utilizing sparse distributed representations, and three Practopoietic traverses is sufficient for the AGI equivalent of the Wright Flyer. I think maybe, yes.

About the Author:

Charles Edward Culpepper, III is a Poet, Philosopher and Futurist who regards employment as a necessary nuisance…