Why Experience Matters for Artificial General Intelligence

Marco Alpini / Op Ed

Posted on: December 17, 2015 / Last Modified: December 17, 2015

Experience is a highly regarded human quality, yet it is generally neglected when we wonder about the future abilities of Artificial General Intelligence (AGI). We are probably missing a fundamental factor in our debate about AI.

Experience is almost everything to us: it is what makes evolution work, and we are what we are because experience shaped us through natural selection.

Without experience, our brain would be no more than a couple of kilos of meat, regardless of how good and fast its ability to process information is. This might be true for AI too.

Experience is also an essential element in the rise of intelligence and consciousness. It is very hard to imagine how a human baby's brain could gain consciousness without the experience of interacting with the external world and with its own body.

The following aspects will play a fundamental role in the behaviour of Artificial General Intelligence and in the level of danger it poses, and they all depend on experience.

Mental Models

It is likely that our brain creates a mental model of the world around us through sensory inputs, and by acting and assessing how the environment reacts. The model is a representation of reality that is continuously shaped and adjusted by experience.

The Mental Model theory, initially proposed by Kenneth Craik in 1943, is a very plausible explanation of how our cognitive mind works, and it also underlies the learning processes being developed for Artificial General Intelligence.

The brain uses the mental model to make predictions and to verify them through observation. The process is probabilistic, both because the world is complex and because we always have to deal with information of limited availability and reliability. The model will never perfectly match external reality and, while we always try to gain more information to improve it, we have to accept acting on what we have at any given time. AGI will be no different from us in this respect.
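The predict, observe and adjust loop described above can be illustrated with a deliberately tiny sketch. It assumes that a "mental model" can be reduced to a single belief about how likely an event is, and it uses a Beta-Bernoulli update, a standard Bayesian technique chosen here for illustration; neither the Mental Model theory nor this article prescribes it. The class and method names (`BeliefModel`, `predict`, `update`, `variance`) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BeliefModel:
    """Hypothetical toy 'mental model': a Beta(alpha, beta) belief about the
    probability that an event happens (e.g. 'the door opens when I push it')."""
    alpha: float = 1.0  # pseudo-count of observed occurrences (uniform prior)
    beta: float = 1.0   # pseudo-count of observed non-occurrences

    def predict(self) -> float:
        """Expected probability of the event under the current belief."""
        return self.alpha / (self.alpha + self.beta)

    def update(self, observed: bool) -> None:
        """Adjust the belief after comparing the prediction with reality."""
        if observed:
            self.alpha += 1
        else:
            self.beta += 1

    def variance(self) -> float:
        """Remaining uncertainty: it shrinks with experience but never reaches zero."""
        total = self.alpha + self.beta
        return (self.alpha * self.beta) / (total ** 2 * (total + 1))


if __name__ == "__main__":
    model = BeliefModel()
    for outcome in [True, True, False, True]:
        print(f"predicted {model.predict():.2f}, observed {outcome}")
        model.update(outcome)
    print(f"estimate {model.predict():.2f}, uncertainty {model.variance():.4f}")
```

Each observation nudges the estimate and narrows the uncertainty, but with finite experience the model stays an approximation, which is the point the paragraph makes about both humans and AGI.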

Through experience we develop common sense, the ability to guess and the ability to ask questions. When we feel that one of our internal models is inadequate, we ask for, or look for, more information to improve its reliability. Experience teaches us when it is worth chasing more information and how much effort we should put into it.

We have learned that it is not worthwhile to aim for full knowledge of every detail needed to build an exact mental model of a situation we have to deal with. We usually look for the minimum information necessary to obtain an approximation of reality that is sufficient for the specific purpose.

Mental models are also the building blocks of our thoughts. Thinking is an internal process that simulates alternative inputs, challenging our mental models and assessing what the outcome could be in different scenarios. There appears to be an internal engine that keeps testing our models through a continuous simulation of alternatives. Our thinking is driven by a continuous, unstoppable and automatic series of “what if?” questions.

It is through this process that ideas are generated. Ideas are created by simulating alternative scenarios and by the interaction of all our mental models. Our assessment of how reliable the scenario we consider the best representation of reality actually is drives our decision making, and experience is fundamental to this process.

There is a threshold that, once reached, enables our actions to take place. If it is not reached, we can’t decide, we are doubtful, and we prefer inaction to making mistakes, unless the situation forces us to act. Artificial Intelligence cannot be that different from us in dealing with a world of scarce information.
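The threshold idea can be made concrete with a minimal decision rule. This is a sketch under stated assumptions, not a model of how brains or AGI actually decide: it assumes confidence can be expressed as a single number between 0 and 1, and the function name `decide`, the `Choice` values and the default threshold of 0.8 are all hypothetical choices for illustration.

```python
from enum import Enum


class Choice(Enum):
    ACT = "act"
    SEEK_INFORMATION = "seek more information"
    WAIT = "wait"


def decide(confidence: float, threshold: float = 0.8,
           info_available: bool = True, forced: bool = False) -> Choice:
    """Hypothetical rule: act only when confidence in the best-ranked scenario
    reaches the threshold, unless the situation forces a decision."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be a probability in [0, 1]")
    if confidence >= threshold or forced:
        return Choice.ACT
    # Below the threshold: improve the model if we can, otherwise stay doubtful.
    if info_available:
        return Choice.SEEK_INFORMATION
    return Choice.WAIT


if __name__ == "__main__":
    for conf, info, forced in [(0.9, True, False), (0.6, True, False),
                               (0.6, False, False), (0.6, False, True)]:
        print(conf, info, forced, "->", decide(conf, info_available=info, forced=forced).value)
```

Below the threshold the rule prefers gathering information or inaction over risking a mistake, and only an external constraint (`forced=True`) overrides that preference.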

Self-awareness, consciousness and free will

The self is one of these mental models, and it is therefore likely that mental modelling is at the basis of self-awareness and consciousness.

This process could also shed light on whether free will really exists. Decision making depends on the complexity of the interactions between the various mental models, which continuously change and adjust themselves, driven by the internal thinking process, stimulated by ideas and by inputs received from the external world.

This process cannot repeat itself, and the state of our mind will never be the same twice. The decisions that express our free will are the result of our state of mind at any given moment. Given the complexity of the interaction between our mind and the external world, the argument that our decisions are the outcome of a deterministic process, and that free will therefore does not exist, is purely semantic.

Additional complexity comes from the fact that we can always do the opposite of what our internal model suggests is the best course of action: because we are scared, because we may seize a more pleasant experience, or simply because we want to annoy or surprise someone. Feelings also play a major role in our behavior, making everything less deterministic.

It is likely that beyond a certain level of complexity determinism loses meaning, as happens in fluid dynamics. The logical argument that, given a certain initial state of a complex system, its behavior is entirely determined by the laws of physics, no matter how complex it is, holds only for closed systems. If the system is open and interacts with the rest of the universe, as our brain does, the deterministic stance no longer has any practical meaning.

There is no reason why we shouldn’t be able to build an artificial intelligence capable of creating mental models of the world and using them to guide its own actions. A mechanism similar to the one used by human brains could progressively improve its models and performance through experience.

These artificial minds will likely develop free will, if unconstrained.

Does computational brute force really count?

From the point of view of the Mental Model theory, the speed of information processing is not hugely important, because the main constraints in dealing with the real world are the availability and quality of information and how quickly the environment provides feedback on our actions.

Even if a synthetic mind could count on infinite speed and power in processing information and could assess unlimited alternative scenarios simultaneously, it would still have to deal with scarcity. Insufficient, inaccurate and wrong inputs would impair its effectiveness. It would have to wait for feedback from the environment, it would not know everything, and its mental models of the world would be approximations of widely varying accuracy. It would make mistakes, and it would have to learn from them.

Dealing with an imperfect world and with a lack of knowledge, and needing to gain experience through interaction with a non-digital, slow-moving environment, will make AGI much more human than we think. Living in our world will be nothing like playing chess against Mr. Kasparov.

Once experience is introduced in the game of intelligent speculation, the importance of computational brute force is greatly reduced.

Provided that we are competent and trained and have the necessary information, we generally have a pretty clear and quick idea of what to do. Decision making in our minds is quick; it is interacting with the world and with everybody else that is slow. What also slows us down is gaining enough awareness of a situation to make good decisions and then enacting them in the environment. AGI will face the same problem: it will be very fast at analyzing data and deciding what to do but, in order to make good decisions, it will need good data.

The time humans spend on situational awareness and on the doing side of our businesses vastly exceeds the time needed to evaluate the available information and make the consequent decisions.
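This argument has the same shape as Amdahl's law from computer architecture: speeding up only one part of a task cannot speed up the whole task by more than the reciprocal of the time spent on everything else. The sketch below applies that formula; the function name `overall_speedup` is hypothetical, and the 10% share of time spent on evaluation and decision making is an illustrative assumption, not a figure from this article.

```python
def overall_speedup(decision_share: float, decision_speedup: float) -> float:
    """Amdahl's law applied to a task where only the decision-making part gets faster.

    decision_share:   fraction of total time spent evaluating information and deciding
    decision_speedup: how many times faster that part becomes
    The remaining (1 - decision_share) of the time, gathering information and acting
    in the world, is assumed to run at its original speed.
    """
    if not 0.0 <= decision_share <= 1.0:
        raise ValueError("decision_share must be between 0 and 1")
    if decision_speedup <= 0:
        raise ValueError("decision_speedup must be positive")
    return 1.0 / ((1.0 - decision_share) + decision_share / decision_speedup)


if __name__ == "__main__":
    # Illustrative assumption: deciding takes 10% of the time; a machine decides a million times faster.
    print(f"{overall_speedup(0.1, 1e6):.3f}")  # 1.111
```

Under that assumption, a million-fold faster "brain" makes the whole task only about 11% faster, because the slow interaction with the environment still dominates.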

Computers seem so much better than us because they are confined to processing information we provide. We have been doing all the hard work for them, packaging up the inputs and acting upon the outputs. As soon as artificial intelligence develops the ability to operate outside the purely computational domain, we will see a very different story in terms of performance.

Educating AGIs – Understanding versus Computing

Artificial General Intelligence will need to be educated and trained. It will have to develop its internal mental models through experience. Overloading an artificial brain with a huge amount of information, without making sense of it, will only cause confusion and misunderstanding.

AGI will have to develop understanding: it will have to really understand things – not just memorize, correlate and compute them.

Understanding is different from simple correlation: it will have to create internal models, conceptualizing its inputs. We will probably feed these artificial minds information gradually, while monitoring their reactions and understanding. We will have to interact with them and make sure that they are correctly interpreting the information they receive. Artificial Intelligence will have to develop common sense, which demonstrates understanding, as well as empathy and an awareness of ethical principles.

If AGI is left to gorge itself on all the information available in the world at once, without any guidance or control, it will probably end with a blue screen of death, or with useless, unpredictable and even dangerous outcomes.

It is likely that this process will be gradual, slow and controlled, and it may take months or even years to bring an artificial intelligence with human-like capabilities up to speed.

From this point of view, an initial hard take-off of Artificial Intelligence, caused by a self-improvement loop getting out of hand and quickly outsmarting us in dealing with the world, is unlikely.

In due time, once educated and trained, Artificial Intelligence will eventually become better than us, but this will be a controllable soft take off.

Concomitant friendly and unfriendly Artificial Intelligence

We often think about the scenario of losing control of Artificial Intelligence as a situation where we are alone facing this threat.

However, it is much more likely that a multitude of machines will be developed progressively, up to the point when intelligence arises. It will arise more than once, and it is likely that some of these intelligences will be friendly and some won’t, similar to how humanity works. Some of us are bad people but, provided they remain a minority, we can cope with them.

The problem will be more about how we can make sure that, at any given time, there are many more friendly Artificial Intelligence units around us than unfriendly ones.

Artificial Intelligence may be friendly and turn unfriendly at a later stage for whatever reason, and vice versa, but provided that a balance is always kept, we should be able to control the situation.

The only way to ensure that friendly AGIs will be the majority is through education, and by fostering ethical values and empathy with all other beings. This is ultimately much more a moral battle for humanity than a technological one.

Ethics is key

The last consideration is about freedom and ethics.

We cannot really expect to develop a self-aware intelligence that treats us well, respects us, helps us, understands us and shares our values while being our slave. It would be a contradiction in terms.

Sooner or later intelligent beings will have to be freed and, in order to develop empathy for us, they will have to be capable of having feelings. This is essential for embracing the fundamental rules of empathy, such as “don’t do unto others what you don’t want done unto yourself”. The empathic rules are universal and they are at the basis of ethical conduct. There is no way we can have friendly AI if AI is not treated ethically by us in the first place.

Conclusion

Artificial intelligences will ultimately have to deal with the world, its contradictions, its randomness and the limitations of information. They will be better than us in many ways but, perhaps, not a million times better and not in all domains.

We don’t have to assume that a digital intelligence based on electronics is necessarily better than an analog molecular intelligence based on biological processes. Electronic processing is surely better at computing and memorizing, but these are only tools; they are far from representing what intelligence is.

The most probable course of technological development will pass first through the augmentation of our own brains, via external wireless devices that will improve our memory, sensory and computational capabilities. This is likely to be easier than emulating an entire brain, and it is the logical way to close the gap we perceive when comparing ourselves to AGI.

It is easier to augment a human brain with what computers do better than us than to give computers what they can’t do and we can, such as intelligent thinking, self-awareness, consciousness, free will, feeling emotions and behaving ethically.

In this way, we will improve our brain performance, until the point when we gain what we are currently identifying as Artificial Intelligence capabilities. At that point there won’t be “us and them” anymore.

Then we may have to worry more about the rise of unethical super-humans than about losing control of, and being threatened by, Artificial General Intelligence.

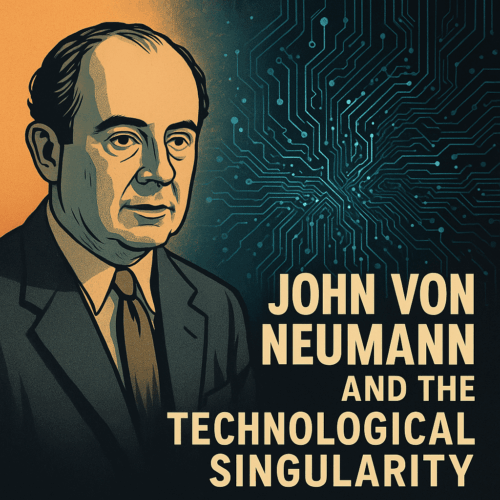

About the Author:

Marco Alpini is an Engineer and a Manager running the Australian operations of an international construction company. While Marco is currently involved in major infrastructure projects, his professional background is in energy generation and related emerging technologies. He has developed a keen interest in the technological singularity, as well as other accelerating trends, that he correlates with evolutionary processes leading to what he calls “The Cosmic Intellect”.
