Why We Need an Ethical Enlightenment in AI Relations

Daniel Faggella / Op Ed

Posted on: October 22, 2015 / Last Modified: August 29, 2022

While many may be intrigued by the idea, how many of us actually care about robots, in the relational sense of the word? Dr. David Gunkel believes we need to take a closer, more objective look at our moral decision-making, which in the past has been more capricious than empirical. He believes that our moral decisions have depended less on hard rationality and more on culture, tradition, and individual choice.

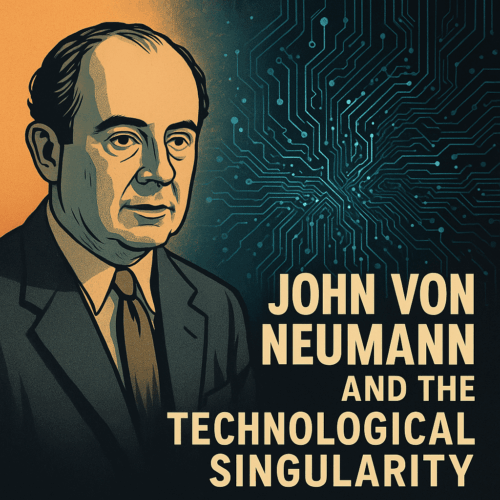

If (or when) robots end up in our homes, taking care of people and helping to manage daily chores, we will inevitably have to decide whether to include or exclude those robots from certain rights and privileges. In this new frontier of ethical thought, it’s useful to look at past examples of rights and privileges being extended to entities other than people.

In the last few years, the U.S. Supreme Court has strengthened its recognition of corporations as legal persons. The Indian government recognized dolphins as “non-human persons” in 2013, effectively banning cetacean captivity. These past shifts in society’s moral compass are worth keeping in mind as we engineer robotic devices that will become part of the ‘normal’ societal makeup in the coming decades.

Part of what we need to do is Nietzschean in nature. That is, we should interrogate the values behind our values and get people to ask the tough, sometimes abstract questions, such as “That’s been our history, but can we do better?” David believes we should not fool ourselves into thinking we are doing something, at least with integrity, that we are not actually doing.

When it comes to our AI-driven devices, Gunkel wants us to look seriously at the way we situate these objects in our world. How do we actually relate to these devices? Granted, there is not much of a track record yet. “Some people have difficulties getting rid of their smartphone, they become kind of attached to it in an odd way,” remarks Gunkel. There is certainly no rulebook that tells us how to treat our smart devices.

He acknowledges that some might dismiss the idea of AI ethics altogether until an AI exists whose intelligence, or even awareness, is close to a human’s. “I don’t think it’s a question of level of awareness of a machine – it’s not about the machine – what will matter is how we (humans) relate to AI,” says David. “Sentience may be a red herring, it may be the way that we excuse thinking about this problem, to say that it’s not our problem now, we’ll just kick it down the road.”

Without any set rules, will more advanced social robots be treated as slaves, or as companions? PARO, an interactive robot in the form of a seal, is now being used in hospitals and other care facilities around the world. There is already evidence that many elderly patients treat these robots like pets; what happens when one of these seals has to be replaced or taken away? Again, there is no precedent to guide us.

We might draw a parallel here to children and their stuffed animals. Any parent knows that you can’t simply replace a stuffed animal with one that looks like it: the child will cry and ask for the old one, no matter how attractive or expensive the new toy. “This is a real biological way in which we connect with not only people but also objects,” says Gunkel. Anticipating how people will react to future robotic entities may be more challenging than we realize.

Kant presented a related argument that we can apply to this line of thought, explains David. The famous philosopher didn’t love animals, but he argued against kicking a dog because doing so diminishes the moral sphere in which we live, both within our own conscience and within the greater moral community. With this concept in mind, what foundational policies might we put in place? In other words, what ground rules could help direct our relationship with AI as we move forward?

These rules will certainly evolve, like all human laws and ethical conceptions, but Gunkel suggests some key questions we should ask now to arrive at ground rules for the near-term future:

- What is it we are designing?

We need to be very careful about what AI-driven machines we design and how we design them. Engineering too often focuses solely on results, with too little time spent thinking about ethical outcomes. We already face a very real and dangerous question: whether to continue down the road of designing autonomous weapons.

- After we’ve created such entities, what do we do with them? How do we situate them in our world?

- What happens in terms of law and policy?

Courts have already set early precedents for how we treat entities that are not human, and it seems plausible to make the same argument for autonomous AI. For example, a corporation isn’t sentient, but it is made up of sentient people and is considered to have rights akin to a person’s.

Whether or not you agree with Gunkel, setting precedents for how we approach the legal and moral aspects of future robotic entities, considering why we are creating such entities, and thinking through how they should be treated in return are necessary steps for citizens and politicians who hope to avoid future conflicts and hedge against catastrophe.

About the Author:

Dan Faggella is a graduate of UPENN’s Master of Applied Positive Psychology program, as well as a national martial arts champion. His work focuses heavily on emerging technology and startup businesses (TechEmergence.com), and on the pressing issues and opportunities of augmenting consciousness. His articles and interviews with philosophers and experts can be found at SentientPotential.com