Magic, Manic and Monstrous: How Facebook is Programming Us

Socrates / Op Ed

Posted on: March 29, 2018 / Last Modified: November 2, 2018

What if you take a bunch of super-smart private-school and Ivy League Ph.D. graduates from fields as diverse as data and behavioral science, marketing and psychology? Then enhance the team with the lateral thinking of a maverick, pink-haired high-school dropout and endow them with a treasure trove of 5,000 data points for each of 90 million people. Get a computer-scientist-turned-hedge-fund-billionaire to fund them as an angel investor and embrace the Facebook ethos of “move fast and break things.”

What do you get?

Does discouraging opposition voters from participating in elections in Nigeria, stirring up and exploiting ethnic tensions in Latvia, manufacturing a “graffiti youth campaign” in Trinidad and Tobago, and manipulating Brexit and an American presidential election sound about right?

Technology is not enough. Neither is an expensive education, an advanced science degree or personal intelligence. If you don’t believe me, just look at Enron, WorldCom, Tyco, Freddie Mac, AIG, Lehman Brothers or Arthur Andersen, all of which were in many ways better endowed with human and other resources than the SCL Group or its offspring Cambridge Analytica [CA]. Yet, in the end, their intelligence, money, power and technology helped them do infinitely more damage than good.

Story and Weaponizing Narrative

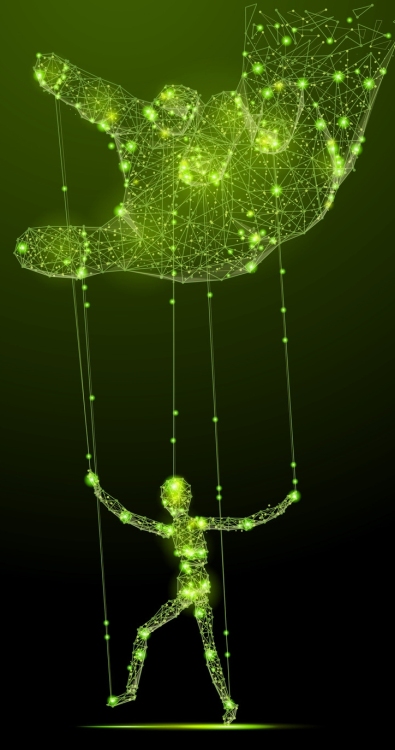

Story is the most powerful tool in the world. Military and political leaders have known this and used narrative as a weapon for millennia, because if one can control and manipulate your story, one can control and manipulate you.

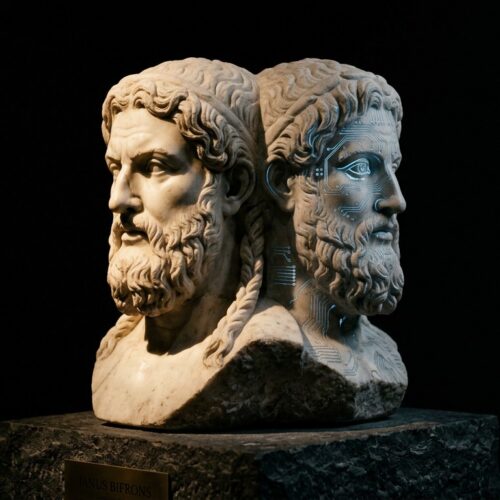

This is not a new phenomenon. But only recently have advances in science and technology allowed narrative to be weaponized at a global scale, with previously unimagined effects and devastating efficiency.

Here is how it works:

First, you “harvest” data. Then you analyze it for “vulnerabilities.” Finally, you exploit the identified vulnerabilities to change people’s behavior. This could mean changing whether and what people buy; whether and how people resist an oppressive government or a foreign invasion; whether and how people vote; and so on.

So, at least in theory, if you reach people via a medium they trust, and tell them the right story, in the right voice, exploiting their individual vulnerabilities, and you do that enough times, then eventually you can make them believe, buy and do whatever you want.

That is what weaponizing narrative is all about, the truth notwithstanding. And now we know it is not just a theory, because it is what the SCL Group and its progeny, Cambridge Analytica and AggregateIQ [AIQ], attempted to do during the Brexit referendum and the 2016 US presidential election. The evidence suggests they may well have succeeded, since a “shift” of only 2% of targeted voters would have been enough to alter the final outcome.

Let’s go a little more in-depth into each step of the process:

Monetization and Data Harvesting

Data is the new oil because everything runs on it. So you need data. Accurate data. And a lot of it: the more the better because, naturally, there is never enough. To get data you must go where the data is: mostly Facebook, but also Google, Amazon, Netflix, YouTube, other social media platforms, Internet Service Providers, cable TV, security camera feeds, credit card transactions, phone-company, library or medical records. Anything and everything that is or can be tracked.

Like most other companies, Facebook is driven by its primary directive of making money for its shareholders. But, unlike most other companies, Facebook has a unique problem: it has more data than anyone else while lacking any major source of revenue other than advertising built on that data. So Facebook must keep finding ingenious ways to collect more and more data about its users, as well as new ways to convert that data into revenue. Thus the imperative to monetize becomes the imperative to surveil and, eventually, the enabler of weaponization. In essence, to be as financially successful as possible, Facebook had to become a massive surveillance organization. And so it has.

In other words, the problem with Facebook, but also with Google and others, is not what COO Sheryl Sandberg called a few “bad actors.” The real problem is that their entire business model revolves around acquiring personal data and then selling clients the ability to use it to target specific users with specific messages. So it is worth highlighting, once again, that we are Facebook’s product, not its clients. The actual clients are companies such as the SCL Group and Cambridge Analytica, which pay millions of dollars to Facebook. Therefore, the more users and the more data Facebook can gather, the more money it can make. It is a monetization-via-surveillance business model. Ditto for Google and, to a lesser extent, for Amazon, Netflix and most other internet giants.

PsyOps and Psychographics

Psychological Operations [PsyOps] is what the military means when it talks about “winning hearts and minds” in Afghanistan, Iraq or anywhere else. In essence, PsyOps deploy psychological tools with the goal of changing people’s thoughts and behavior. [You can also call it social engineering or social programming if you want.]

Psychographics is a methodology for identifying and understanding personality traits and features. You can think of it simply as a tool for manipulating people’s psyche to radically affect how they think, feel and act about any given subject. This matters because personality drives behavior, and behavior is how one does anything, be it buying, voting, fighting and so on.

For example, after Cambridge researcher Aleksandr Kogan ran a survey that tricked Facebook users into handing over their data, as well as their friends’ data, Cambridge Analytica acquired details on 87 million Facebook users, including 61 million Americans. The company then used that treasure trove of private information, which it acquired both illegally and for free, to create a model that can predict the personality of every single American.

The model used is the so-called OCEAN model (also known as the Big Five) from personality psychology, where the acronym stands for:

Openness – how open you are to new experiences

Conscientiousness – whether you prefer order, planning, and habits in your life

Extraversion – how social you are

Agreeableness – whether you put other people’s needs and society ahead of your own

Neuroticism – how much you tend to worry about things

While it seems simple, the model is not merely an academic exercise or an educated-guessing machine. Rather, it is a scientifically rigorous model that lets its users target, predict, test and fine-tune the message they send to each individual voter or consumer, based on that person’s unique vulnerabilities, in order to evoke the desired outcome or behavior. [Again, you can call it social engineering, programming or good old-fashioned brainwashing, if you prefer.]

Micro-Targeting

Once you have figured out the psychological profile of each voter, you can send each of them a micro-targeted message tailored to that specific person, with the goal of changing their behavior.

What this means is that, instead of shouting in the town square, you can now whisper in everyone’s ear, having learned everything important about each person. The facts or the truth are irrelevant, because at this point it is all about pressing people’s buttons and exploiting their vulnerabilities to make them do your bidding. So you whisper one thing to this voter and an entirely different thing to another.

For example, you can make Bernie supporters depressed to the point where they don’t show up to vote at all. You can push moderates to go extreme by manipulating their individual fears and emotions with fake news and other targeted content. You can exploit cultural, religious or ethnic divisions or create new ones. It doesn’t matter what you do, and it doesn’t matter if it is true or not, as long as it serves your purpose. As Cambridge Analytica managing director Mark Turnbull explains:

“It is no good fighting an election campaign on the facts because actually, it’s all about emotion. The big mistake political parties make is that they attempt to win the argument rather than locating the emotional center of the issue – the concern, and speaking directly to that.”

In other words, it is all about manipulating people’s emotions regardless of the actual truth or facts. Cambridge Analytica offers its clients a scientific, finely tuned, full-service, personalized propaganda machine aimed at doing just that. And it is happy to lease that machine to anyone able to pay for it, including corrupt and authoritarian regimes across the world. But the SCL Group, CA and AIQ are just the visible tip of the iceberg. Hundreds of other companies and organizations, such as Palantir or the Russian Federal Security Service, are either developing or have already developed similar capabilities.

Magic, Manic and Monstrous: How Facebook is Programming Us

As well-known futurist Gerd Leonhard has noted, Facebook started out as pure magic. Then it got us so addicted that it became manic. And, finally, it is revealing itself to be monstrous. But if you think this was some kind of random outcome unforeseen by its naive but idealistic founders, you’d be wrong. As former Facebook president Sean Parker admitted publicly:

“The thought process that went into building these applications, Facebook being the first of them to really understand it, was all about: ‘How do we consume as much of your time and conscious attention as possible?'”

“And that means that we need to sort of give you a little dopamine hit every once in a while, because someone liked or commented on a photo or a post or whatever. And that’s going to get you to contribute more content, and that’s going to get you … more likes and comments.”

“It’s a social-validation feedback loop … exactly the kind of thing that a hacker like myself would come up with, because you’re exploiting a vulnerability in human psychology.”

“The inventors, creators — it’s me, it’s Mark [Zuckerberg], it’s Kevin Systrom on Instagram, it’s all of these people — understood this consciously. And we did it anyway.”

In other words, Facebook set out to program an addictive platform exploiting the vulnerabilities in human psychology from the very beginning. And now Facebook is literally programming us.

It is programming us to log in, to check in, to click, to tag, to share, to update, to stay updated, to go live, to like, to dislike, to get angry, to be happy, to get depressed, to friend, to start a group, to unfriend, to give up on privacy and to literally never leave the platform. It has programmed us to depend on it and trust it so much that we can’t even imagine life without Facebook. So much so that the question “Do you have Facebook?” is now obsolete. The real question is “Does Facebook have you?” And the answer, of course, is “Yes, it does.” [That holds regardless of whether you actually have an account. So I’m not convinced that the #DeleteFacebook campaign is the right approach in the long run, though I hope it brings at least some short-term benefits.]

Privacy, Free Will, and Democracy

The reality is that Facebook has become the biggest storytelling platform. On the one hand, it has told us all a story: the story of what Facebook is. [Or, rather, what Facebook pretends to be.] And we believed it. On the other hand, it is so successful because it has also become a platform for all of us to chronicle and tell our own stories. So, one way or another, the stories of most people and most companies eventually end up there.

But it is Facebook’s storytelling platform, not ours. They control the medium, they control the message, they control the stories that we see and, more and more, they control us. As a saying often attributed to Plato puts it: “Those who tell the stories rule society.” So, given how successful Facebook is at telling stories and programming us to listen and respond, it should come as no surprise that a seemingly endless number of people and organizations want to jump on the Facebook bandwagon and program their own messages into our psyche. Thus, what is personal data for us is money in Facebook’s bank account. [Which is why Roger McNamee, one of Facebook’s first investors, noted that “the incentives are fundamentally stacked against user privacy.”]

So when a mercenary contractor with a background in military intelligence and psychological warfare in the service of multiple authoritarian regimes looks at Facebook, they see their wet dream come true. Because, at least to them, Facebook doesn’t stand for social. It stands for surveillance. And thus the imperative to monetize becomes an enabler to weaponize. Of course, data harvesting, psychographics and micro-targeting are not simply Cambridge Analytica’s business model. They are first and foremost Facebook’s business model. The only difference is that, at least in the past, Facebook used them mostly to show us ads that sell us things. But the genie is out of the bottle, and election campaigns will never be the same.

The next question, though, is: “Why stop at elections?”

AI will soon start telling us not only how to vote but also where to go to school, what sports to play, who our friends should be, where to eat, what books to read, what movies to watch, whom to marry and what to do with our lives. Do you think Facebook isn’t doing everything it can to make sure it is its AI that keeps whispering in our ear? [Of course, Google is no stranger to such ambitions: Eric Schmidt has said that Google is not a search engine but rather an AI company aiming to know what you want to know, even before you want to know it.]

And we are finally getting to the core problem:

Can either free will or democracy survive in a world where the lack of personal privacy, big data, AI and a diversity of actors, from ill-intentioned foreign governments to trillion-dollar corporations, have turned brainwashing into an exact science?

As Cambridge Analytica whistleblower Christopher Wylie noted:

“We risk fragmenting society in a way where we don’t have any more shared experiences and we don’t have any more shared understanding. If we don’t have any more shared understanding, how can [we] be a functional society?”

Of course, the Silicon Valley lobby has pushed the US government into a hands-off, laissez-faire attitude that basically allows companies to do whatever they want with our data. And we are only slowly becoming aware of the consequences. But this is just the beginning. Things will likely get much worse before they get any better.

Facebook’s original motto was “move fast and break things.” And it has done both remarkably well: it has moved fast to reach more than 2 billion people, and it has also broken major things, such as democracy, be it in the case of Brexit, Trump or elsewhere. Furthermore, former Facebook vice president for user growth Chamath Palihapitiya has said that social media in general, and Facebook in particular, are ripping apart the fabric of how society works. [And for what?! To make money by exploiting vulnerabilities in our psychology and monetizing our personal data.]

The costs and dangers associated with Facebook are only beginning to come into view but, apparently, they are unlikely to make the company change its core business model and risk diminished growth. As demonstrated by the June 18, 2016 “Ugly Truth” memo by Andrew Bosworth, co-inventor of Facebook’s News Feed and currently in charge of its virtual reality efforts, “anything the company [Facebook] did to grow” was “de facto” good and justified, even if it meant aiding bullying or terrorism and thereby contributing to people’s deaths.

***

It’s a brave new world out there, and Big Brother could not have dreamed of the tools for manipulation and control that Facebook has today. Facebook started as pure magic, got manic and is now at least in danger of becoming totally monstrous. What is worse, it is possible that, as with Facebook, the business model of the entire internet is fundamentally broken. And that is why technology is the future of politics. The only question is whether we will try to sort out our social problems democratically, in a way where all people retain control over their own free will, or whether we will all simply be programmed to do somebody else’s bidding, be it Facebook’s, Google’s, Amazon’s, Alibaba’s or Baidu’s.

The Cambridge Analytica exposé: everything you need to know in 3 min

Cambridge Analytica claims to use data ‘to change audience behaviour’. But now a whistleblower, Christopher Wylie, has come forward to expose the company’s practices. Wylie describes how its CEO, Alexander Nix, attracted support from the then Breitbart editor, Steve Bannon, and investment from the billionaire Robert Mercer before obtaining help from the Cambridge professor Aleksandr Kogan to harvest tens of millions of Facebook profiles.

Cambridge Analytica whistleblower: ‘We spent $1m harvesting millions of Facebook profiles’

Christopher Wylie, who worked for data firm Cambridge Analytica, reveals how personal information was taken without authorisation in early 2014 to build a system that could profile individual US voters in order to target them with personalised political advertisements. At the time, the company was owned by the hedge fund billionaire Robert Mercer and headed by Donald Trump’s key adviser, Steve Bannon. Its CEO is Alexander Nix.

Cambridge Analytica whistleblower Christopher Wylie speaks out | Extended cut

Cambridge Analytica reportedly mined tens of millions of Facebook profiles and that may have helped influence the U.S. election and the U.K. Brexit vote. Now Canadian whistleblower Christopher Wylie is speaking out about what he knows.

Silicon Valley broke California, and it is currently in the process of breaking the USA. The only question that remains is: are we going to let it break the world?

Cambridge Analytica: Undercover Secrets of Trump’s Data Firm

An investigation by Channel 4 News has revealed how Cambridge Analytica claims it ran ‘all’ of President Trump’s digital campaign – and may have broken election law. Executives were secretly filmed saying they leave ‘no paper trail’.

Cambridge Analytica: Whistleblower reveals data grab of 50 million Facebook profiles

The British data firm described as “pivotal” in Donald Trump’s presidential victory was behind a ‘data grab’ of more than 50 million Facebook profiles, a whistleblower has revealed to Channel 4 News.

In an exclusive television interview, Chris Wylie, former Research Director at Cambridge Analytica tells all.

Did Cambridge Analytica play a role in the EU referendum? – BBC Newsnight

Did the data analytics company Cambridge Analytica play a role in the UK’s EU referendum? BBC Newsnight’s Gabriel Gatehouse reports.

Stephen Analyticizes Cambridge Analytica

Data firm Cambridge Analytica exploited Facebook to gather information of millions of potential voters. Oh, and prostitutes!
