VR vision primer on Computer Graphics in general and Virtual Reality in particular

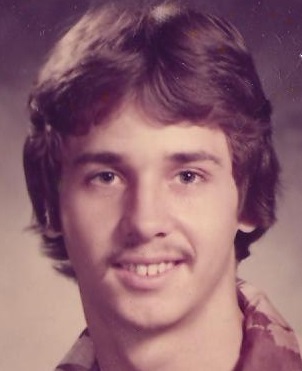

Charles Edward Culpepper / Op Ed

Posted on: October 21, 2015 / Last Modified: October 21, 2015

Many people wonder why virtual reality either falls short of appearing ‘real’, or seems ‘real enough’ yet, with extended viewing, can be literally sickening. The following is a VR vision primer that introduces the primary issues involved in the realistic display of moving images. Each of these problems would require very expensive improvements over the technology that now exists. That is not to say that the problems will not be completely resolved. They likely will be. But progress will sometimes come gradually and sometimes in bursts and pauses.

There are, of course, many aspects of VR besides the visual, e.g., haptic systems (for touch), olfactory systems (for smell), auditory systems with true 3D sound (for hearing), and gustatory systems (for taste). Each of these systems has its own issues. And there is always the overarching issue of synesthesia, used here in the sense of coordination between the senses. Each sense will have its own VR system, and these will combine with each other in a synthesis of sensory experience.

There also has to be a way to allow unlimited movement, in the form of walking, running, flying, swimming, etc., so that a small room can emulate an essentially unlimited space. I think this would require a full datasuit that can emulate temperature, moistness, texture, etc., a fly system for body support, and a gimbal system to allow for unlimited orientation in 3D space. I have a design in mind, but that is beyond the scope of this article.

Issue 1: Pixelation

Images on a page or on a computer screen are composed of little dots or squares. These combine in the eye and the mind to form what looks like an unbroken picture. We are all loosely aware, when we watch a movie, that the images are not real objects or subjects, but only a map of colors and brightness on a two-dimensional surface. The dots or squares are typically referred to as pixels. As you reduce the number of pixels, the image becomes progressively less detailed, until in the extreme case there is nothing recognizable but dots or squares.

But beyond a certain number of pixels (resolution), neither the eye nor the mind can notice any improvement. This varies from person to person, but at about 7 or 8 million pixels per square inch the gains in clarity diminish sharply, depending on conditions (movement, lighting, state of mind, etc.). The sweet spot, where nearly everyone is happy with the clarity, is about 7 million pixels per square inch. The human eye is estimated at about 576 megapixels, but only about 7 megapixels matter. For additional explanation and detail see these links:

https://www.clarkvision.com/articles/human-eye/index.html

https://gizmodo.com/what-is-the-resolution-of-the-human-eye-1541242269
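To get a feel for what 7 or 8 million pixels per square inch means, it helps to convert the figure to the more familiar pixels per linear inch (PPI). The sketch below assumes square pixels laid out on a uniform grid, which is my simplification, and the function name is made up for illustration.

```python
import math

def linear_ppi(pixels_per_square_inch: float) -> float:
    """Convert an areal pixel density to pixels per linear inch.

    Assumes square pixels on a uniform grid (an illustrative simplification).
    """
    if pixels_per_square_inch < 0:
        raise ValueError("density must be non-negative")
    return math.sqrt(pixels_per_square_inch)

# The two densities mentioned in the text above.
for density in (7_000_000, 8_000_000):
    print(f"{density:,} px per sq in is about {linear_ppi(density):,.0f} PPI")
```

Under that assumption, the sweet spot of 7 million pixels per square inch works out to roughly 2,646 pixels along each inch.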

Issue 2: Latency

Latency and motion sickness: latency is a perceptible delay, a mismatch, between the image movement seen by the eyes and the motion felt by the vestibular system of the inner ear. In ‘real’ reality the mismatch is essentially imperceptible, but in ‘virtual’ reality the mismatch is significant. This has to do with synesthesia, used here to mean sensory coordination and integration between two or more senses.

This is the big one for the computer graphics industry. No company wants people buying a $600 VR headset that works magnificently for five minutes in the store, only to take it home, use it for fifteen minutes, and start violently vomiting. Often in a Mugar Theater people are warned before the film starts that if they feel like throwing up or passing out they should close their eyes. I know a woman who could not watch Spike Lee movies, because his proclivity for sweeping camera motions made her sick. The same kind of motion sickness has been observed on some amusement park rides.

The company Magic Leap has proposed a technology that bends light via mirrors, controlling it in such a way that it will trick the inner ear into believing that what the eye sees is what it feels. This is related to methods used to create holographic images. Such mirror technology and technique has been around for a long time. It became quite advanced with the Pepper’s Ghost Illusion.

The latency problem is perhaps solvable by mirrors or holograms. See https://makezine.com/projects/diy-hacks-how-tos-the-peppers-ghost-illusion/ [or https://en.wikipedia.org/wiki/Holography]

For speed, a solution may also require Knowm circuits, or Knowm-like circuits, that use memristors to create thermodynamic RAM with Anti-Hebbian and Hebbian (AHaH) processing. https://knowm.org/
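To put a rough number on the latency mismatch described in this section: while the display is catching up, the head keeps turning, so the picture lags behind by an angle equal to head speed times delay. The sketch below is only that arithmetic. The head speed of 100 degrees per second and the list of latencies are illustrative assumptions of mine, not measurements from any headset.

```python
def angular_error_deg(head_speed_deg_per_s: float, latency_ms: float) -> float:
    """Angle (in degrees) the head turns during the display delay.

    This is how far the image lags behind where the inner ear says it should be.
    """
    return head_speed_deg_per_s * latency_ms / 1000.0

# Assumed head turn speed, chosen only for illustration.
HEAD_SPEED_DEG_PER_S = 100.0

for latency_ms in (5, 20, 50, 100):
    error = angular_error_deg(HEAD_SPEED_DEG_PER_S, latency_ms)
    print(f"{latency_ms:>3} ms delay -> image lags by {error:.1f} degrees")
```

Halving the delay halves the lag, which is why so much of the engineering effort goes into shaving milliseconds.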

Issue 3: Frames Per Second

A frame is a single image. When frames are displayed in rapid succession they create the illusion of motion. This discovery was paramount in the creation of the ‘moving pictures’ that made Hollywood a household name. Early methods of displaying images in succession involved turning a hand crank. This had the disadvantage of inconsistency, but a distinct advantage in custom speed control. When less speed was required, you could turn the crank more slowly; when more speed was required, you could turn the crank faster.

The early version of moving pictures came in the form of the Kinetoscope, where a single person viewed images through a peephole. The images resided on a reel with perforated edges that were driven by a gear, which was in turn driven by a crank. It is a perfect example of how a crude idea, once developed, can become a grand world changer. The inventor of the Kinetoscope, Thomas Edison (1847-1931), indicated it would take 46 frames per second to avoid eye strain [Source: https://en.wikipedia.org/wiki/Frame_rate#cite_note-Brownlow-8 ]. The maximum number of frames per second that the eye can process is unknown. It could possibly exceed 4000 frames per second [Source: https://www.100fps.com/how_many_frames_can_humans_see.htm ].
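A frame rate is easier to appreciate when turned into a time budget: the number of milliseconds a system has to produce each frame. The sketch below does that conversion for the two rates quoted above. The function name is mine, and nothing here models how any real headset schedules its frames.

```python
def frame_budget_ms(fps: float) -> float:
    """Milliseconds available to produce one frame at the given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps

# Edison's 46 fps and the speculative 4000 fps upper figure from the text.
for fps in (46, 4000):
    print(f"{fps:>4} fps -> {frame_budget_ms(fps):.2f} ms per frame")
```

At 46 frames per second there are about 21.74 milliseconds to draw each image. At 4000 frames per second there is only a quarter of a millisecond.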

Issue 4: Bits Per Second

How much information the eye-brain complex can process sets a maximum requirement for a display. Once a device exceeds the human capacity to process what it shows, there is no need to improve it further for human use. This maximum has been estimated mathematically. The estimated bits per second across the combined field of vision of both eyes, enough to produce human-level stereoscopic vision, is one billion bits per second [Source: Hans Moravec (born 1948), https://www.transhumanist.com/volume1/moravec.htm ]. Incidentally, this bits-per-second capability of the eyes has been used to extrapolate the information processing capability of the entire human brain.
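For comparison, here is a rough sketch of how many raw bits per second a display would push if it simply sent every pixel of every frame uncompressed. It borrows the 7 megapixel and 46 frames per second figures from earlier in this article and assumes 24 bits of color per pixel, which is my assumption. The raw stream of a display and what the visual system processes are not the same thing, so treat the ratio as an illustration only.

```python
# Moravec's estimate quoted in the text: one billion bits per second.
HUMAN_VISION_BITS_PER_S = 1_000_000_000

def raw_bits_per_second(pixels: int, bits_per_pixel: int, fps: int) -> int:
    """Uncompressed data rate of a display: every pixel, every frame."""
    return pixels * bits_per_pixel * fps

# 7 megapixels and 46 fps come from the article; 24 bits per pixel is assumed.
rate = raw_bits_per_second(pixels=7_000_000, bits_per_pixel=24, fps=46)

print(f"Raw display stream: {rate:,} bits per second")
print(f"Ratio to the one-billion estimate: {rate / HUMAN_VISION_BITS_PER_S:.2f}x")
```

Under these assumptions the raw stream comes to 7,728,000,000 bits per second, several times the one billion figure.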

Issue 5: Vergence-Accommodation Conflict

When you look into the virtual far distance in a VR environment, what is virtually distant somehow feels close by in reality. Vergence is the movement of your eyes in real life as objects move near or far. Hold a pencil at arm's length and your eyes are essentially looking straight ahead. Bring the pencil close to your nose and your eyes begin to cross. Accommodation is the focusing of the eye: as objects come near in real life, the lens of the eye focuses on the near object and the background becomes progressively less focused, that is, blurry. With VR, vergence and accommodation are out of sync. VR uses lenses to bend light such that the images appear about a meter away, while objects farther or closer can appear smudged. And of course, the entire screen is always, unnaturally, in focus. With extended watching, this leads to eyestrain and headaches. In my opinion, the only way to solve this is via artificial intelligence that can trick the eyes into appropriate movement and focus.
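The conflict can be sketched with two small pieces of geometry. The vergence angle is the angle between the two lines of sight when both eyes point at the same object, and focusing demand is usually expressed in diopters, which is one divided by the distance in meters. The code below assumes a distance between the pupils of 63 millimeters and a fixed optical focus of one meter (the "about a meter" mentioned above). Both numbers, and the function names, are illustrative assumptions.

```python
import math

def vergence_angle_deg(distance_m: float, ipd_m: float = 0.063) -> float:
    """Angle between the two eyes' lines of sight for an object straight ahead.

    ipd_m is the distance between the pupils; 63 mm is an assumed typical value.
    """
    return math.degrees(2.0 * math.atan((ipd_m / 2.0) / distance_m))

def conflict_diopters(virtual_distance_m: float, focal_distance_m: float = 1.0) -> float:
    """Gap between where the eyes converge and where the lenses force focus.

    Both distances are converted to diopters (1 / meters) before subtracting.
    """
    return abs(1.0 / virtual_distance_m - 1.0 / focal_distance_m)

for d in (0.25, 0.5, 1.0, 4.0):
    print(f"virtual object at {d:>4} m: vergence {vergence_angle_deg(d):5.2f} deg, "
          f"conflict {conflict_diopters(d):.2f} D")
```

An object drawn at one meter produces no conflict, while an object drawn a quarter of a meter away asks the eyes to cross as if it were near but to focus as if it were a meter off, a gap of 3 diopters.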

Issue 6: Motion Sensitivity vs. Wide Field of View

Your peripheral vision is quite sensitive to motion. In fact, the wider the field of view, the greater the motion sensitivity. If the peripheral motions are inaccurately rendered, the viewer will become nauseated, sometimes very severely, resulting in bouts of vomiting and dizziness. There is a large range of sensitivity to such effects. Some people get very sick very fast. Peripheral vision is processed in the brain differently than central vision, which makes solving the problem very difficult. Flickering in front of your eyes can be ignored, but flickering in the peripheral field must be resolved.

About the Author:

Charles Edward Culpepper, III is a Poet, Philosopher and Futurist who regards employment as a necessary nuisance…