Transhumanism Goes Back To Campus

Jon Nichols / Op Ed

Posted on: October 30, 2015 / Last Modified: August 29, 2022

In the fall of 2013, I lectured to 200 college freshmen on the subject of transhumanism.

Their reaction was one of shock mixed with apprehension about the years ahead of them. That was certainly not my intent, and fortunately I was able to bring the discourse back around to one of balance and even, dare I say, optimism. But those students were first-semester freshmen. While digital natives, they were also doe-eyed and tattered in the face of that daunting experience that is the first few months of college. It was a gamble whether they had had any sleep the night before, and there I was talking to them about concepts like cybernetics.

Surely, I thought, I would not have the same reception teaching my semester-long course on transhumanism to seniors about to graduate. These would be seasoned veterans after all. Tempered and forged in three and a half years of critical thinking, they would at least be aware of how technology is changing the human experience, if not humanity itself. They would be able to entertain a concept while disagreeing with it. Certainly they would receive the notion of the Singularity with a measured response.

No, it was pretty much the same…only with a bit less crying.

I exaggerate. A little. Let me back up a bit.

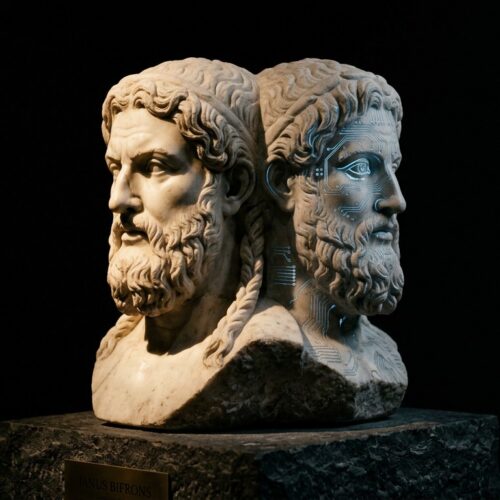

The class started last January. It did indeed cover the technical aspects of transhumanism but the focal point of the course was ethics. Should we or shouldn’t we be doing these things? What are the benefits? What are the consequences? What do you think?

The text for the class was Ray Kurzweil’s The Singularity Is Near. I encouraged the class to disagree with Kurzweil’s writings if they chose to. “Goodness knows a great many people already do,” I told them. We held discussions on the reading, peppered with what has happened since the publication of Kurzweil’s book, such as Watson’s victory on Jeopardy! and the warnings from Stephen Hawking and Elon Musk about artificial intelligence. The students then wrote a 20-page capstone paper on an ethical question of their choosing and presented that paper to the class.

When we met for the last time, I asked the class for a few final thoughts on transhumanism. These were their responses with names changed to protect privacy.

“I never even knew any of this stuff existed,” said Alyssa, a 22-year-old majoring in athletic training.

I expressed shock: They’ve never known a world without the Internet and they have only the dimmest of memories of life without mobile devices. Why is something like artificial intelligence such a far leap?

“But computers that can think for themselves? That’s different,” she responded. “And I never even knew what nanotechnology was before taking this class.”

“Many times I left class having an existential crisis,” said Veronica, a soon-to-be school social worker. “Where are things like artificial intelligence taking us? What does it even mean to be human anymore?”

As is the case with many people, not simply college students, it is sometimes easiest to express things in pop culture terms. Lance, a graduating senior who had just accepted a position with a marketing firm, described his change in thinking this way:

“When I would hear about robots or ‘machines that can think,’ R2-D2 and C-3PO were the first things that would come to my mind,” he said. “I never considered there might one day be an Ultron.”

“There are no strings on me…” I answered, doing my best James Spader and getting a collective shudder from the class.

“Who needs the meatbags?” Lance responded through a laugh, imitating Bender from Futurama.

“I don’t know. I feel hopeful.”

That came from Jessica. She’s a psychology student on her way to grad school.

“If I…God forbid…ever get in a car wreck and lose a limb or something, there may be cybernetics that will let me live the life that I want to,” she said. “If I get cancer, there may be nanotech that will help me get better.”

“At first, my reaction was to reject all of this and say it’s wrong. But this is happening,” said Kate, a major in mass communications. “We’re going to have devices that are autonomous and self-aware. We need to deal with it.”

My interest piqued, I asked Kate just what she was proposing.

“Education,” she answered simply. “People need to know about things like AI. And as we develop super AI, we need to do so with our own sense of ethics in mind. We need to give it a moral compass.”

One student named Matt reminded us of Rev. Christopher Benek, a Presbyterian pastor who wrote an op-ed piece about AI. Benek asserted that AI could “participate in Christ’s redemptive purposes” and “help to make the world a better place.”

“But I don’t believe in god and neither do a lot of other folks,” Veronica said. “So I have to place my faith in people. And I don’t know if we can make the right decisions with this stuff.”

“Then we will need education in ethics just as much as our devices will,” Kate said. “None of the technology that we’ve talked about is really good or bad. It depends what we decide to do with it.”

Kate stole my closing line. She really did. While there were undoubtedly students from the class who will be just fine if they never hear the terms “nanotechnology” or “genetic engineering” again, Kate got the takeaway, if there indeed was any single one. Whether transhumanism results in a utopia, a dystopia, or the more likely muddy middle, the outcome will be due not to the technology itself but to our choices as a species.

After seeing the work of my students, I am left with hope that those choices will be good ones.

About the Author:

Jon Nichols is a science fiction writer who blogs about transhumanism and other topics at Esoteric Synaptic Events. He teaches English and Humanities at a small Midwestern university.

