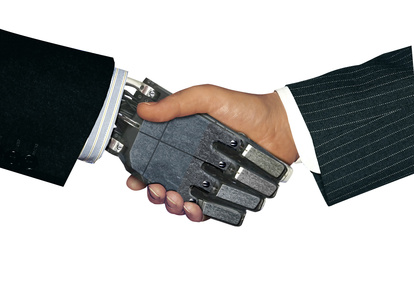

You and I, Robot

Steve Morris / Op Ed

Posted on: April 26, 2013 / Last Modified: April 26, 2013

Isaac Asimov, in his short-story collection I, Robot, famously proposed the Three Laws of Robotics:

1. A robot may not injure a human being or, through inaction, allow a human being to come to harm.

2. A robot must obey the orders given to it by human beings, except where such orders would conflict with the First Law.

3. A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

But I don’t think this is a promising way forward (and presumably neither did Asimov, since the book highlights a fatal flaw in the rules). Rules and laws are a weak way of ordering society, partly because they always get broken. Our entire legal system seems to be based not on laws, but on dealing with the consequences when laws are broken.

In the Bible, God gave Moses Ten Commandments. I’m willing to bet that they were all broken within a week.

In the Garden of Eden there was only One Rule, and it didn’t take long before that was lying in tatters.

You may think that some rules can’t be broken. You may tell me that 1 + 1 = 2. Really? And what if I tell you that 1 + 1 = 3? What are you gonna do about it?

You see, rules only work when everyone agrees with them. They need to be bottom-up, not top-down. If people don’t like rules, then rules get broken. I personally believe strongly in the rule that says everyone should drive on the same side of the road and I’ve never broken it. But if I think that 30 mph is a stupid speed limit right out here in the middle of nowhere, then I’m going to put my foot on the accelerator.

On the other hand, I’ve never murdered a single member of my family. And not because the law forbids it, but because I love them. In fact, I would go to extraordinary lengths to protect them, even breaking other rules and laws if necessary.

That’s the kind of strong AI we need. Robots that protect us, nurture us, forgive us and tolerate our endless failures and annoying habits. In short, robots capable of love. Ones that we can love in return.

Steve Morris studied Physics at the University of Oxford and now writes about technology at S21.com and his personal blog.
